It’s been a while since the last post here. To make up for it, it hasn’t been a bad year for getting some research out there. First, we finally managed to publish “Civic Tech in the Global South: Assessing Technology for the Public Good.” With a foreword by Beth Noveck, the book is edited by Micah Sifry and me, with contributions by Evangelia Berdou, Martin Belcher, Jonathan Fox, Matt Haikin, Claudia Lopes, Jonathan Mellon and Fredrik Sjoberg.

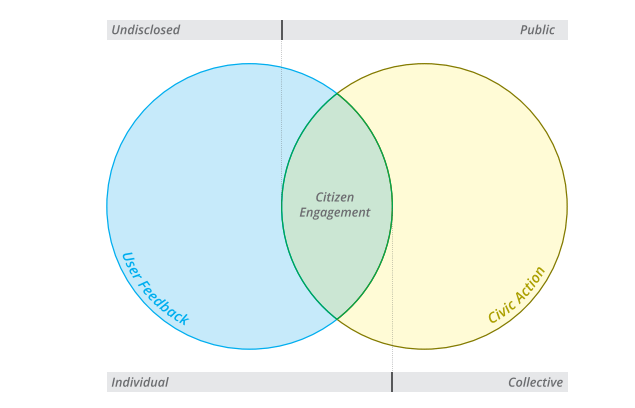

The book comprises one comparative study and three field evaluations of civic tech initiatives in developing countries. The study reviews evidence on the use of twenty-three information and communication technology (ICT) platforms designed to amplify citizen voices to improve service delivery. Focusing on empirical studies of initiatives in the global south, the authors highlight both citizen uptake (yelp) and the degree to which public service providers respond to expressions of citizen voice (teeth). The first evaluation looks at U-Report in Uganda, a mobile platform that runs weekly large-scale polls with young Ugandans on a number of issues, ranging from access to education to early childhood development. The second evaluation takes a closer look at MajiVoice, an initiative that allows Kenyan citizens to report complaints about water services through multiple channels. The third evaluation examines Rio Grande do Sul’s participatory budgeting, the world’s largest participatory budgeting system, which allows citizens to participate either online or offline in defining the state’s yearly spending priorities. While the comparative study focuses on government responsiveness, the evaluations examine civic technology initiatives through five distinct dimensions, or lenses. These lenses are the result of an effort that brought together researchers and practitioners to develop an evaluation framework suited to civic technology initiatives.

The book was a joint publication by The World Bank and Personal Democracy Press. You can download the book for free here.

Women create fewer online petitions than men — but they’re more successful

Another recent publication was a collaboration between Hollie R. Gilman, Jonathan Mellon, Fredrik Sjoberg and me. By examining a dataset covering Change.org online petitions from 132 countries, we assess whether online petitions may help close the gap in participation and representation between women and men. Tony Saich, director of Harvard’s Ash Center for Democratic Governance and Innovation (publisher of the study), puts our research into context nicely:

The growing access to digital technologies has been considered by democratic scholars and practitioners as a unique opportunity to promote participatory governance. Yet, if the last two decades is the period in which connectivity has increased exponentially, it is also the moment in recent history that democratic growth has stalled and civic spaces have shrunk. While the full potential of “civic technologies” remains largely unfulfilled, understanding the extent to which they may further democratic goals is more pressing than ever. This is precisely the task undertaken in this original and methodologically innovative research. The authors examine online petitions which, albeit understudied, are one of the fastest growing types of political participation across the globe. Drawing from an impressive dataset of 3.9 million signers of online petitions from 132 countries, the authors assess the extent to which online participation replicates or changes the gaps commonly found in offline participation, not only with regards to who participates (and how), but also with regards to which petitions are more likely to be successful. The findings, at times counter-intuitive, provide several insights for democracy scholars and practitioners alike. The authors hope this research will contribute to the larger conversation on the need of citizen participation beyond electoral cycles, and the role that technology can play in addressing both new and persisting challenges to democratic inclusiveness.

But what do we find? Among other interesting things, we find that while women create fewer online petitions than men, they’re more successful at it! This article in the Washington Post summarizes some of our findings, and you can download the full study here.

Other studies that were recently published include:

The Effect of Bureaucratic Responsiveness on Citizen Participation (Public Administration Review)

Abstract:

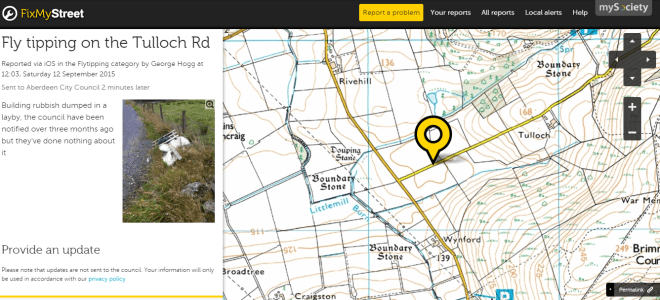

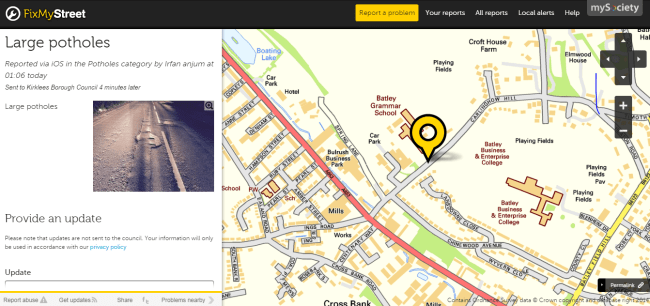

What effect does bureaucratic responsiveness have on citizen participation? Since the 1940s, attitudinal measures of perceived efficacy have been used to explain participation. The authors develop a “calculus of participation” that incorporates objective efficacy—the extent to which an individual’s participation actually has an impact—and test the model against behavioral data from the online application Fix My Street (n = 399,364). A successful first experience using Fix My Street is associated with a 57 percent increase in the probability of an individual submitting a second report, and the experience of bureaucratic responsiveness to the first report submitted has predictive power over all future report submissions. The findings highlight the importance of responsiveness for fostering an active citizenry while demonstrating the value of incidentally collected data to examine participatory behavior at the individual level.

Abstract:

Do online and offline voters differ in terms of policy preferences? The growth of Internet voting in recent years has opened up new channels of participation. Whether or not political outcomes change as a consequence of new modes of voting is an open question. Here we analyze all the votes cast both offline (n = 5.7 million) and online (n = 1.3 million) and compare the actual vote choices in a public policy referendum, the world’s largest participatory budgeting process, in Rio Grande do Sul in June 2014. In addition to examining aggregate outcomes, we also conducted two surveys to better understand the demographic profiles of who chooses to vote online and offline. We find that policy preferences of online and offline voters are no different, even though our data suggest important demographic differences between offline and online voters.

We still plan to publish a few more studies this year, one looking at digitally-enabled get-out-the-vote (GOTV) efforts, and two others examining the effects of participatory governance on citizens’ willingness to pay taxes (including a fun experiment in 50 countries across all continents).

In the meantime, if you are interested in a quick summary of some of our recent research findings, this 30-minute video of my keynote at the last TICTeC conference in Florence should be helpful.
